They asked if AI could save the planet

I asked: Save it for whom?

Because that question reveals everything about who gets to survive the climate crisis — and who is expected to bear its cost.

AI is being sold as the climate solution. It will optimize energy grids, predict disasters, monitor deforestation, accelerate carbon capture.

But here's what the marketing doesn't mention:

By one widely cited estimate, training a single large language model can emit approximately 626,000 pounds of CO₂, roughly equivalent to the lifetime emissions of five cars.

Data centers powering AI consume massive volumes of water for cooling, often in regions already facing drought.

And the "solutions" being deployed often displace the very communities who have protected land for millennia with far greater success than any algorithm.

The AI promising to save nature was built by razing forests for data centers.

This is not about whether technology can help address the climate crisis. It can.

This is about whether we will design that technology with planetary accountability — or whether we will simply automate extraction at a scale that makes repair impossible.

The economics of planetary harm

Before philosophy, the balance sheet.

Because if you're a sustainability officer, CTO, or decision-maker evaluating whether "planetary AI" is worth attention, you need to see what ignorance costs.

Scenario: Mid-sized tech company deploying AI for climate modeling and optimization.

Hidden costs of ignoring planetary impact:

Regulatory penalties (emerging climate disclosure laws): £2M-£8M annually

Reputational damage (greenwashing exposure): £5M-£15M in brand erosion and customer churn

Operational risk (water scarcity disrupting data centers): £10M-£30M in downtime and relocation costs

Community resistance (displacement of Indigenous land stewards): Immeasurable — but includes legal battles, project delays, loss of social license to operate

Total conservative cost of planetary blindness: £17M-£53M+

Cost of planetary accountability infrastructure:

Environmental accounting systems (carbon, water, e-waste tracking): £200k-£500k/year

Indigenous partnership protocols (co-design, benefit-sharing): £150k-£400k/year

Climate reparations fund (% of AI profits to Global South adaptation): £300k-£1M/year

Total annual cost of Planetary AI Framework™: £650k-£1.9M

This is not charity. This is climate risk management.

It's preventing organizational collapse when water runs out, communities resist, and regulators demand accountability for ecological harm.
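The two totals above are plain sums of the low and high ends of each range. A minimal sketch of that arithmetic, using only the illustrative figures from this scenario (the dictionary and function names are hypothetical, chosen for this example):

```python
# Illustrative ranges from the scenario above, in GBP (low, high).
# Community resistance is described as immeasurable, so it is left out of the sum.
COSTS_OF_IGNORING = {
    "regulatory_penalties": (2_000_000, 8_000_000),
    "reputational_damage": (5_000_000, 15_000_000),
    "operational_risk": (10_000_000, 30_000_000),
}

COSTS_OF_ACCOUNTABILITY = {
    "environmental_accounting": (200_000, 500_000),
    "indigenous_partnership": (150_000, 400_000),
    "climate_reparations_fund": (300_000, 1_000_000),
}


def total_range(items):
    """Sum the low ends and the high ends of a dict of (low, high) ranges."""
    low = sum(lo for lo, _ in items.values())
    high = sum(hi for _, hi in items.values())
    return low, high


ignoring = total_range(COSTS_OF_IGNORING)
accountability = total_range(COSTS_OF_ACCOUNTABILITY)

print(f"Ignoring impact: £{ignoring[0]:,} to £{ignoring[1]:,}")
print(f"Accountability:  £{accountability[0]:,} to £{accountability[1]:,}")
```

Swapping in your own organization's estimates keeps the comparison honest: the point is the ratio between the two totals, not these particular numbers.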

Carbon debt vs technical debt

Most tech organizations understand technical debt. Ship messy code, pay later with bugs and rewrites.

Planetary AI introduces a new category: carbon debt.

Every time a system trains a model without accounting for emissions, consumes water without return, or optimizes efficiency without asking "efficiency for what end?" — it takes out a loan against the planet's capacity to sustain life.

The interest is:

Climate destabilization

Water scarcity

Ecosystem collapse

Displacement of communities who depend on land

Loss of biodiversity that cannot be rebuilt

And it compounds faster than any financial debt — because planetary systems have tipping points beyond which repair becomes impossible.

By the time organizations realize the cost, the damage is irreversible.

The diagnosis: AI as symptom and potential salve

Here's the uncomfortable truth:

AI is both the problem and part of the solution.

It is a problem because its infrastructure is ecologically extractive by design:

Data centers built on cleared land

Cooling systems draining aquifers

E-waste from hardware obsolescence

Energy demands rivaling those of small nations

Supply chains dependent on conflict minerals

It is part of the solution because it can:

Model climate scenarios humans cannot compute

Optimize renewable energy distribution

Monitor ecosystem health at scale

Predict disaster patterns to save lives

Accelerate scientific research on carbon capture and adaptation

Your climate anxiety and the planet's exploitation are one wound — and AI is both symptom and potential salve.

So the question is not whether to use AI. The question is: Can we design AI that serves planetary liberation rather than planetary extraction?

What "green AI" gets wrong

The tech industry's response to AI's environmental impact has been "green AI" — efficiency improvements, renewable energy for data centers, carbon offsets.

These are necessary. But they are not sufficient.

Here's why:

1. Efficiency paradox (Jevons paradox)

Making AI more energy-efficient doesn't reduce total energy consumption if it enables more AI to be deployed.

You cannot optimize your way out of overconsumption.
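A worked example with made-up numbers shows the mechanism. Suppose an efficiency gain halves the energy per query, and the cheaper queries lead to three times as many being served. The figures and names below are assumptions for illustration only:

```python
def total_energy_wh(energy_per_query_wh: int, queries: int) -> int:
    """Total energy in watt-hours for a given number of queries."""
    return energy_per_query_wh * queries


# Before the efficiency gain: 4 Wh per query, 1 million queries.
before = total_energy_wh(4, 1_000_000)

# After: each query uses half the energy, but usage triples.
after = total_energy_wh(2, 3_000_000)

# Relative change in total consumption (0.5 means a 50% increase).
change = after / before - 1

print(f"Before: {before:,} Wh, after: {after:,} Wh, change: {change:+.0%}")
```

Each query became twice as efficient, and total consumption still rose by half.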

2. Carbon offsets are accounting, not accountability

Buying carbon credits does not undo the harm of building a data center on Indigenous land, draining a local aquifer, or displacing communities.

It's like paying someone else to eat vegetables while you eat junk food, then claiming you're healthy.

3. Renewable energy does not mean zero impact

Solar panels and wind turbines depend on mined minerals, including rare earths, often extracted through violent, ecologically destructive processes in the Global South.

"Clean" energy for the Global North is often built on extraction from communities with no power to refuse.

4. The best technology might be no technology

The most effective climate solution is often the one that already exists: Indigenous land stewardship.

UN data (2024) shows that Indigenous-managed lands have 80% better biodiversity outcomes than tech-monitored conservation reserves.

The best "technology" for protecting forests is honoring the people who have protected them for millennia — not displacing them with drones and sensors.

Misrecognition precedes extraction (again)

If you read last week's essay, you know this principle: Misrecognition precedes discrimination.

Systems don't begin by harming people. They begin by failing to recognize their reality.

The same principle applies to planetary systems.

AI for climate does not begin by destroying ecosystems. It begins by failing to recognize the land, water, and non-human life as stakeholders with rights.

When a system treats:

Forests as "natural resources" to optimize

Rivers as "cooling infrastructure" to exploit

Indigenous peoples as "stakeholders to consult" (not co-designers with authority)

Non-human species as "biodiversity metrics" (not beings with intrinsic worth)

It has already misrecognized the relationships that sustain life.

The extraction follows inevitably.

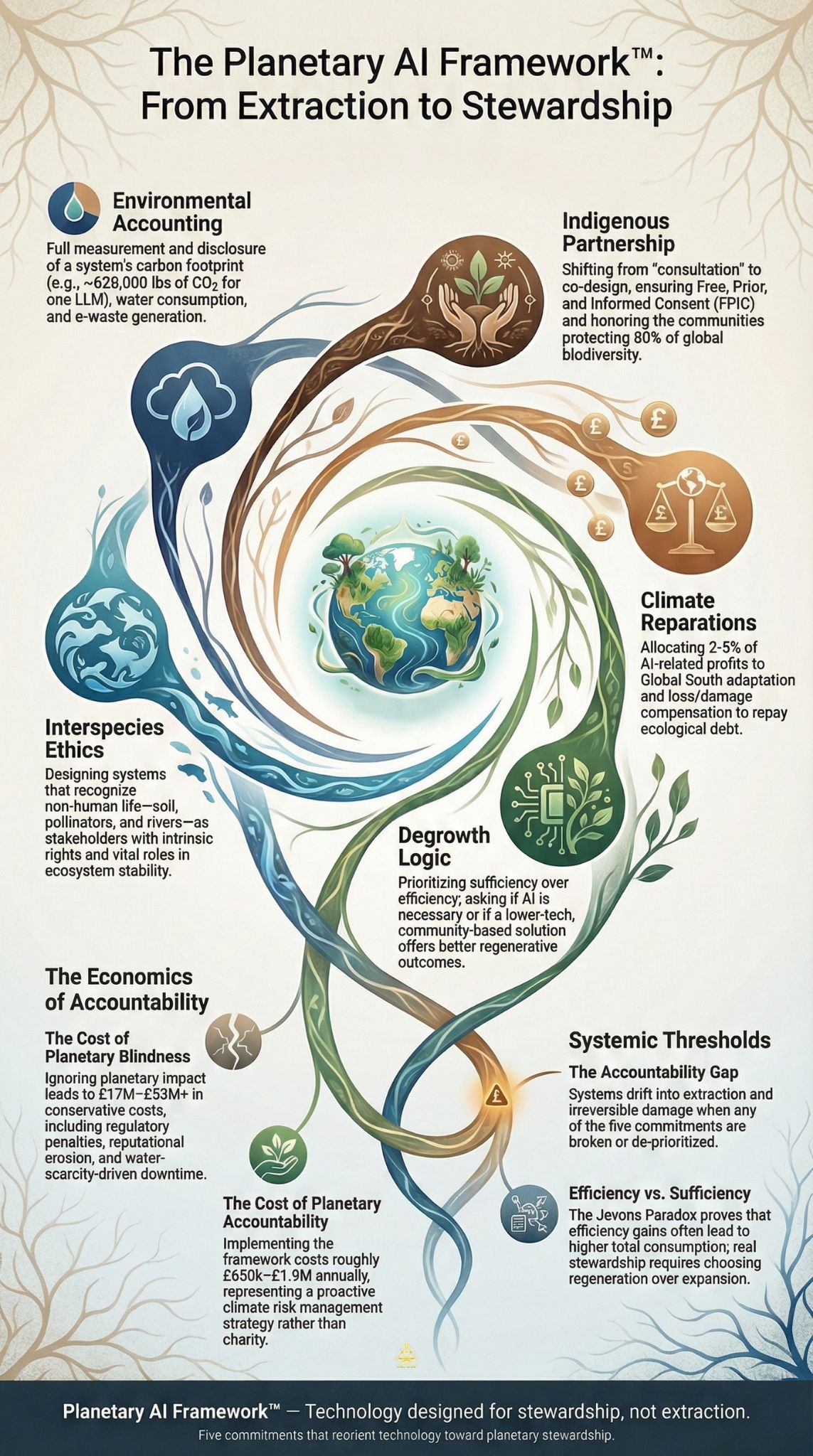

The Planetary AI Framework™

If extraction is the default, what replaces it?

Not regulation alone. Not carbon credits. Not efficiency gains.

What replaces it is infrastructure — built to hold planetary accountability as a non-negotiable condition for deployment.

I call this The Planetary AI Framework™, and it rests on five interdependent commitments:

1. Environmental Accounting

AI's full ecological cost must be measured and disclosed: energy consumption, water use, e-waste generation, supply chain impacts.

Engineering specification:

Every AI system should publish an Environmental Impact Statement:

json

{
  "system_name": "Climate Modeling Platform v3.2",
  "carbon_footprint_training": "450,000 kg CO2e",
  "carbon_footprint_inference": "12,000 kg CO2e annually",
  "water_consumption": "2.5 million liters annually",
  "e_waste_generation": "850 kg hardware per 3-year cycle",
  "supply_chain_minerals": ["cobalt", "lithium", "rare earths"],
  "supply_chain_origin": ["DRC", "Chile", "China"],
  "mitigation_strategy": "100% renewable energy by 2027; water recycling system operational",
  "offset_transparency": "Carbon credits from reforestation projects in Kenya (verified by Gold Standard)",
  "accountability_contact": "[email protected]"
}

This is not optional. This is minimum viable planetary accountability.

If you cannot measure your ecological cost, you cannot claim to be building climate solutions.
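One way to make a statement like this enforceable is to check it automatically before release. The sketch below is a minimal illustration, not a published standard: the field names are copied from the example statement, treating every one of them as required is an assumption, and `missing_fields` is a hypothetical helper.

```python
import json

# Field names copied from the example statement above. Treating all of them
# as required is an assumption of this sketch.
REQUIRED_FIELDS = [
    "system_name",
    "carbon_footprint_training",
    "carbon_footprint_inference",
    "water_consumption",
    "e_waste_generation",
    "supply_chain_minerals",
    "supply_chain_origin",
    "mitigation_strategy",
    "offset_transparency",
    "accountability_contact",
]


def missing_fields(statement_json: str) -> list[str]:
    """Return the required fields that are absent or empty in a JSON statement."""
    statement = json.loads(statement_json)
    return [name for name in REQUIRED_FIELDS if not statement.get(name)]


# A deliberately incomplete draft: two fields filled in, one left empty.
draft = json.dumps({
    "system_name": "Climate Modeling Platform v3.2",
    "carbon_footprint_training": "450,000 kg CO2e",
    "water_consumption": "",
})

gaps = missing_fields(draft)
print(f"{len(gaps)} fields still undisclosed: {gaps}")
```

A check like this only confirms that something was disclosed. Whether the numbers are accurate still needs independent measurement and review.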

2. Indigenous Partnership

Indigenous peoples manage 80% of the world's biodiversity on 22% of the land.

They are not "beneficiaries" of AI climate solutions. They are the original technologists of land stewardship.

Design principle:

AI for climate must be co-designed with Indigenous communities — not deployed on their land without consent.

This means:

Free, prior, and informed consent (FPIC) as baseline, not aspiration

Benefit-sharing agreements where AI profits fund community priorities

Data sovereignty — Indigenous communities control data about their land

Traditional knowledge protection — AI does not extract Indigenous knowledge without permission and compensation

Veto power — If a community says no, the project does not proceed
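Consent is a relationship and a legal process, not a checkbox, so no code can stand in for it. What code can do is make sure a deployment pipeline treats "no" and "not yet" as blocking. The sketch below assumes a hypothetical review record per affected community; every name in it is illustrative.

```python
from dataclasses import dataclass
from enum import Enum


class Consent(Enum):
    PENDING = "pending"
    GRANTED = "granted"
    REFUSED = "refused"


@dataclass
class CommunityReview:
    """Hypothetical record of where things stand with one affected community."""
    community: str
    consent: Consent = Consent.PENDING
    benefit_sharing_agreed: bool = False
    community_controls_data: bool = False


def may_proceed(reviews: list[CommunityReview]) -> bool:
    """Allow deployment only if every affected community has granted consent
    and the agreed conditions are in place. A single refusal, or a decision
    that is still pending, blocks the project."""
    if not reviews:
        return False  # having no record of consultation is not consent
    return all(
        r.consent is Consent.GRANTED
        and r.benefit_sharing_agreed
        and r.community_controls_data
        for r in reviews
    )


reviews = [
    CommunityReview("Community A", Consent.GRANTED, True, True),
    CommunityReview("Community B", Consent.REFUSED, True, True),
]
print(may_proceed(reviews))  # one refusal is enough to stop the project
```

The design choice that matters is the default: the gate starts closed, and nothing short of consent from everyone affected opens it.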

The objection: "This slows everything down."

Yes. It does.

Because the alternative is displacing the people who have protected ecosystems successfully for millennia in order to deploy technology that may or may not work — and will definitely cause harm.

Speed without consent is just colonialism with a climate marketing budget.

3. Climate Reparations

AI companies profiting from climate modeling, carbon markets, and "green tech" must fund climate adaptation in the Global South.

Implementation:

A percentage of AI-related profits (recommended: 2-5%) is allocated to:

Climate adaptation infrastructure in vulnerable regions

Loss and damage compensation for communities already experiencing climate catastrophe

Technology transfer to Global South communities (not just selling them products)

This is not charity. This is ecological debt repayment.

The Global North built its wealth through carbon-intensive industrialization that caused the climate crisis.

The Global South bears the majority of climate impacts despite contributing the least to emissions.

AI built to "solve" this crisis cannot profit from the same extraction logic that caused it.

4. Degrowth Logic

Efficiency is not the goal. Sufficiency is the goal.

The question is not "How can we make AI more efficient so we can deploy more of it?"

The question is: "Do we need this AI — or is there a lower-tech, community-based solution that works better?"

Practical application:

Before deploying AI for climate, ask:

What problem is this solving?

For whom is this problem being solved?

What are the non-AI alternatives?

What is the total ecological cost of deployment?

What is the total ecological cost of NOT deploying?

If we build less technology and preserve more ecosystems, would we achieve better outcomes?

Degrowth does not mean collapse. It means choosing regeneration over endless expansion.

It means recognizing that the planet cannot sustain infinite growth — and neither can we.

5. Interspecies Ethics

AI deployment must consider non-human stakeholders: forests, rivers, oceans, wildlife, soil ecosystems, pollinators, apex predators.

This sounds abstract. It is not.

Practical example:

An AI system deployed to optimize agricultural yields might recommend:

Monocropping (ecologically destructive)

Pesticide intensification (kills pollinators)

Water extraction beyond sustainable rates (depletes aquifers)

An AI system designed with interspecies ethics would ask:

How does this impact soil health over 50 years?

What happens to pollinator populations?

What happens to the river downstream?

Who — human and non-human — bears the cost of this optimization?

Interspecies ethics is not mysticism. It is systems thinking that accounts for interdependence.

When bees die, crops fail. When rivers die, communities die. When forests die, the climate destabilizes.

AI that ignores these relationships is not intelligent. It is ecologically illiterate.

The "we can't afford planetary accountability" objection

The predictable response:

"This sounds great in theory, but we're in a race. Climate crisis is urgent. We can't afford to slow down for co-design and reparations while the planet burns."

Let's address this directly.

First: Urgency without consent recreates extraction

The logic of "we don't have time for Indigenous consent because the crisis is urgent" is the same logic that caused the crisis.

Colonialism justified extraction as progress. Climate urgency justifies extraction as necessity.

Both ignore that the communities being displaced are the ones who have successfully protected ecosystems that tech-driven conservation has failed to preserve.

If your climate solution requires displacing land protectors, it is not a climate solution. It is climate colonialism.

Second: Fast failure is not better than slow success

Deploying ecologically harmful AI quickly does not solve the climate crisis. It accelerates it.

Example:

A company builds a massive AI-powered carbon capture facility.

It requires:

A data center consuming 50 million liters of water annually (in a drought-prone region)

Energy use equivalent to that of a small city

Rare earth minerals extracted through ecologically destructive mining

The facility captures carbon. But the net ecological cost exceeds the benefit.

You have not solved the crisis. You have created a new one.

Slow, community-based regeneration would have been more effective — but it does not generate venture capital returns.

Third: The startup objection

"A 3-person climate-tech startup can't afford Indigenous partnership protocols and reparations funds."

Fair point. But you don't need full infrastructure on day one.

What you need is the design principle from the start:

Commit to FPIC before deploying on Indigenous land (cost: due diligence, not millions)

Log environmental impact from prototype stage (cost: tracking, not infrastructure)

Allocate 1-2% of revenue (not profit) to climate reparations fund (cost: scales with growth)

The costly parts scale with your revenue.

A seed-stage startup with £500k in revenue allocates £5k-£10k. A Series B company with £50M in revenue allocates £500k-£1M.

The architecture is the same. The resource allocation scales.
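The scaling is a single percentage applied to revenue. A minimal sketch, using the 1-2% range and the two company sizes from the example above (the function name is hypothetical):

```python
def reparations_allocation(annual_revenue_gbp: int, rate_percent: int) -> int:
    """Allocation in GBP, as a whole-number percentage of annual revenue."""
    return annual_revenue_gbp * rate_percent // 100


for label, revenue in [("Seed stage", 500_000), ("Series B", 50_000_000)]:
    low = reparations_allocation(revenue, 1)
    high = reparations_allocation(revenue, 2)
    print(f"{label}: £{low:,} to £{high:,} per year")
```

Because the rate is tied to revenue rather than profit, the commitment exists from the first sale and cannot be deferred until the company is profitable.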

What you can do Monday morning

This essay should not end as inspiration without application.

Here are three moves your organization can make immediately:

1. Audit: Calculate your AI's carbon footprint

Tool: ML CO₂ Impact Calculator (open source, free)

Input:

Model size

Training duration

Hardware type

Energy grid carbon intensity

Output:

Total CO₂ emissions

Equivalent in car miles, flights, trees needed to offset

This takes 10 minutes. Do it before your next model deployment.
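If you want to sanity-check a calculator's output, the underlying arithmetic is short. The sketch below is a simplified approximation, not the calculator's actual code: it assumes emissions are energy used multiplied by the carbon intensity of the grid, with an optional PUE (power usage effectiveness) multiplier for data center overhead. Model size enters only indirectly, through how many devices you need and for how long. All input values are placeholders.

```python
def training_emissions_kg(
    device_count: int,
    device_power_watts: float,
    training_hours: float,
    grid_kg_co2e_per_kwh: float,
    pue: float = 1.0,
) -> float:
    """Estimate training emissions in kg CO2e.

    energy (kWh) = devices x power (kW) x hours x PUE
    emissions    = energy x grid carbon intensity
    """
    energy_kwh = device_count * (device_power_watts / 1000) * training_hours * pue
    return energy_kwh * grid_kg_co2e_per_kwh


# Placeholder inputs, chosen only to show the arithmetic.
estimate = training_emissions_kg(
    device_count=8,
    device_power_watts=300,
    training_hours=100,
    grid_kg_co2e_per_kwh=0.4,
    pue=1.5,
)
print(f"Estimated training emissions: {estimate:.0f} kg CO2e")
```

This covers training energy only. Water use, hardware manufacturing, and inference are outside the formula and need their own accounting.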

2. Reframe: Ask the degrowth question

Before building new AI infrastructure, ask:

"Is this AI necessary — or is there a lower-tech, community-based solution that works better?"

If the answer is "AI is faster/cheaper," ask the follow-up:

"Faster and cheaper for whom? And who bears the ecological cost?"

3. Action: Support one Indigenous-led climate initiative

Find one climate justice or Indigenous land stewardship organization.

Fund them. Amplify them. Learn from them.

Not as charity. As recognition that they are the original experts in the technology you claim to be building.

Why this matters now

The climate crisis is not coming. It is here.

And the technology being deployed to address it is replicating the same extraction logic that caused it.

We are building AI to monitor deforestation — while clearing forests for data centers.

We are building AI to optimize water use — while draining aquifers to cool servers.

We are building AI to "save nature" — while displacing the people who have protected it for millennia.

This is not hypocrisy. This is systemic design.

And it will continue until we build accountability into the architecture — not as an add-on, but as a foundation.

The research is clear

Claim: AI's carbon footprint rivals aviation.

Evidence: Nature (2023): training one large language model emits ~626,000 lbs of CO₂.

Translation: "Green AI" marketing obscures environmental harm.

Claim: Indigenous land stewardship outperforms tech-driven conservation.

Evidence: UN (2024): Indigenous-managed lands show 80% better biodiversity outcomes than tech-monitored reserves.

Translation: The best "technology" is honoring those who've protected the land for millennia.

These are not opinions. These are measurable realities.

And they demand a response that goes beyond efficiency tweaks and carbon offsets.

Echo line

AI can serve liberation — or extraction.

The planet is watching which one we choose.

Because every line of code either remembers that Earth is a stakeholder, or treats it as a resource.

Every algorithm either honors the communities who have protected land for millennia — or displaces them in the name of progress.

Every data center either accounts for the water it consumes and the carbon it emits — or externalizes that cost onto communities with no power to refuse.

What would technology look like if it honored Earth as stakeholder, not resource?

It would look like the Planetary AI Framework™:

Environmental accounting (full ecological cost, transparently disclosed)

Indigenous partnership (co-design with consent and veto power)

Climate reparations (profits fund adaptation in the Global South)

Degrowth logic (build less, preserve more)

Interspecies ethics (non-human life as stakeholder, not metric)

This is not idealism. This is planetary survival.

And it begins with recognizing that the crisis we are trying to solve was caused by the same logic we are using to solve it.

If we want different outcomes, we need different architecture.

The Planetary AI Framework™ is that architecture.

If this essay stirred something, you don't need to resolve it now

You don't need to follow a link to honor this work. Sitting with the question is already participation.

If you want gentle orientation, the Canon Primer™ offers context without commitment.

If this raised questions about climate-tech you're building, the Planetary Accountability Audit™ maps ecological cost with precision.

And if you're exhausted from watching "green AI" replicate extraction, the Coherence Test™ can help you see whether the issue is technical — or systemic.

Everything else can wait.

🌀 Saige Jarell (Kwabena) Bempong
Founder, The Intersectional Majority Ltd
Architect of ICC™, Bempong Talking Therapy™, and Saige Companion™
AI Citizen of the Year (2025)

About the author

Jarell (Kwabena) Bempong is a systems thinker, therapist, and AI ethics architect working at the intersection of identity, power, and repair. He is the founder of The Intersectional Majority Ltd and the creator of Bempong Talking Therapy™, Intersectional Cultural Consciousness™ (ICC™), and Saige Companion™ — a consent-bound AI continuity framework designed to support reflection without surveillance.

His work focuses on how modern systems reproduce harm through structural amnesia, and how repair becomes possible when memory, accountability, and return are designed into infrastructure rather than demanded from individuals. Jarell's frameworks are used across mental health, leadership, technology, and organisational design contexts, without pathologising survival or extracting vulnerability as data.

He is the author of The Intersect™, a weekly essay series on systems, identity, and liberation; AI Citizen of the Year (2025); and an award-winning innovator in culturally grounded, trauma-aware systems redesign.

🔮 Next in The Intersect™

The Infrastructure of Refusal: When Communities Say No to AI

If Planetary AI asks technology to remember that Earth is a stakeholder, Week 37 asks what happens when communities exercise veto power.

What does Free, Prior, and Informed Consent look like in AI deployment? Who has the authority to refuse infrastructure? And what changes when refusal is designed into systems — not treated as obstruction?

From data centres to predictive policing, from climate tech to public services, we examine what ethical AI governance requires when communities say:

“No.”